Hadoop Pig Tutorial: What is Apache Pig? Architecture, Example

We will start with an introduction to Pig.

What is Apache Pig?

Apache Pig is a high-level platform for analyzing large data sets. Programs for it are written in a language called Pig Latin. Pig was originally developed at Yahoo!

In the MapReduce framework, programs must be expressed as a series of Map and Reduce stages. Most data analysts are not familiar with this programming model. Pig was built on top of Hadoop as an abstraction to bridge that gap.
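The following is a rough emulation of the Map and Reduce stages behind a "count records per country" query. It uses standard Unix tools on a hypothetical two-column input (product,country). awk stands in for the mapper, sort for the shuffle, and a second awk for the reducer. It only illustrates the programming model; it is not how Hadoop actually runs jobs.

```shell
# Rough emulation of a MapReduce "count records per key" job with Unix tools.
# Input (hypothetical): CSV lines of the form product,country.

map_stage() {
  # Mapper: emit one "key<TAB>1" pair per record; the key is the country.
  awk -F',' '{ print $2 "\t1" }'
}

shuffle_stage() {
  # Shuffle/sort: bring all pairs with the same key next to each other.
  LC_ALL=C sort -t "$(printf '\t')" -k1,1
}

reduce_stage() {
  # Reducer: sum the values of each run of identical keys.
  awk -F'\t' '
    NR > 1 && $1 != key { print key "\t" sum; sum = 0 }
    { key = $1; sum += $2 }
    END { if (NR > 0) print key "\t" sum }
  '
}

count_by_key() {
  map_stage | shuffle_stage | reduce_stage
}

printf '%s\n' 'Product1,Canada' 'Product2,Norway' 'Product1,Canada' | count_by_key
# prints "Canada<TAB>2" and then "Norway<TAB>1"
```

Pig generates and chains these stages for you. Writing them by hand is the work it removes.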

Apache Pig lets people spend more time analyzing large data sets and less time writing MapReduce programs. Pigs eat anything, and the Pig language is likewise designed to work with any kind of data. Hence the name, Pig!

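In this beginner's Apache Pig tutorial, you will learn about Pig's architecture and execution modes, how to download and install Pig, and how to write an example Pig script.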

Pig Architecture

The architecture of Pig consists of two components:

- Pig Latin, which is a language
- A runtime environment for running Pig Latin programs

A Pig Latin program consists of a series of operations, or transformations, that are applied to the input data to produce output. Together, these operations describe a data flow. The Pig execution environment translates that data flow into an executable representation. Underneath, this representation is a series of MapReduce jobs that the programmer never has to write. This lets the programmer focus on the data rather than on how the processing is executed.

Pig Latin is a relatively simple data flow language that uses familiar data-processing keywords such as JOIN, GROUP and FILTER.

Execution modes:

Pig has two execution modes:

- Local mode: Pig runs in a single JVM and uses the local file system. This mode is suitable only for analyzing small datasets.
- MapReduce mode: Queries written in Pig Latin are translated into MapReduce jobs and run on a Hadoop cluster. The cluster may be pseudo-distributed or fully distributed. Use MapReduce mode on a fully distributed cluster to run Pig on large datasets.
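You select the mode with the -x option when launching pig (pig -x local or pig -x mapreduce). MapReduce mode is the default when -x is omitted. The sketch below only builds and prints the command line, so it runs without Pig installed. The helper name pig_cmd and the script name sales.pig are hypothetical.

```shell
# Hypothetical helper: print the pig command line for a given execution mode.
#   local     -> single JVM, local file system (small data, testing)
#   mapreduce -> Pig Latin compiled to MapReduce jobs on the Hadoop cluster
pig_cmd() {
  mode="$1"
  script="$2"
  case "$mode" in
    local|mapreduce) echo "pig -x $mode $script" ;;
    *) echo "unsupported mode: $mode" >&2; return 1 ;;
  esac
}

pig_cmd local sales.pig       # prints: pig -x local sales.pig
pig_cmd mapreduce sales.pig   # prints: pig -x mapreduce sales.pig
```

A common pattern is to develop a script in local mode on a small sample, then run the same script unchanged in MapReduce mode on the full data.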

How to Download and Install Pig

Now in this Apache Pig tutorial, we will learn how to download and install Pig:

Before you start, make sure Hadoop is installed. Switch to the user 'hduser', the user ID used during the Hadoop configuration. If you configured Hadoop under a different user ID, switch to that one instead.

Step 1) Download the latest stable release of Pig from one of the mirror sites listed at

http://pig.apache.org/releases.html

Select the tar.gz file (not src.tar.gz) to download.

Step 2) Once the download is complete, navigate to the directory containing the downloaded tar file. Move the tar file to the location where you want to set up Pig. In this case, we will move it to /usr/local:

sudo mv pig-0.12.1.tar.gz /usr/local

Move to the directory containing the Pig files:

cd /usr/local

Extract the contents of the tar file:

sudo tar -xvf pig-0.12.1.tar.gz

Step 3) Modify ~/.bashrc to add the Pig-related environment variables.

Open the ~/.bashrc file in any text editor of your choice and add the following lines. With the extraction above, the installation directory is /usr/local/pig-0.12.1.

export PIG_HOME=<Installation directory of Pig>
export PATH=$PIG_HOME/bin:$HADOOP_HOME/bin:$PATH

Step 4) Load this environment configuration into the current shell:

. ~/.bashrc
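After sourcing, you can run a quick sanity check. This minimal POSIX sh sketch confirms that PIG_HOME is set and that $PIG_HOME/bin is on PATH. The function name check_pig_env is hypothetical.

```shell
# Sanity check: PIG_HOME is set and $PIG_HOME/bin is on PATH.
check_pig_env() {
  if [ -z "${PIG_HOME:-}" ]; then
    echo "PIG_HOME is not set" >&2
    return 1
  fi
  case ":$PATH:" in
    *":$PIG_HOME/bin:"*) echo "OK: $PIG_HOME/bin is on PATH" ;;
    *) echo "$PIG_HOME/bin is not on PATH" >&2; return 1 ;;
  esac
}

check_pig_env || echo "Fix ~/.bashrc, then run: . ~/.bashrc" >&2
```

Wrapping PATH in colons lets the pattern match the entry at the start, middle, or end of PATH without false matches on longer directory names.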

Step 5) Recompile Pig to support Hadoop 2.2.0.

Here are the steps:

Go to the Pig home directory:

cd $PIG_HOME

Install Ant

sudo apt-get install ant

Note: The download time depends on your internet speed.

Recompile Pig. The -Dhadoopversion=23 property builds Pig against the Hadoop 0.23/2.x line.

sudo ant clean jar-all -Dhadoopversion=23

The recompilation downloads multiple components, so the system must be connected to the internet.

If the process seems stuck and the terminal shows no progress for more than 20 minutes, press Ctrl + C and rerun the same command.

In our case, the build took about 20 minutes.
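If you would rather not watch the terminal, you can approximate the rerun advice with GNU coreutils timeout. The helper below is hypothetical and not part of Pig or Ant. It differs from the manual rule in one way: timeout caps the total duration of each attempt rather than detecting 20 minutes without output. Set the limit well above the roughly 20 minutes the build took here. The one hour in the usage line is an arbitrary choice.

```shell
# Hypothetical helper: run a command with a per-attempt time limit, retrying on failure.
# Requires GNU coreutils `timeout`, which exits non-zero (124) when the limit is hit.
retry_with_timeout() {
  attempts="$1"
  limit="$2"
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if timeout "$limit" "$@"; then
      return 0
    fi
    echo "attempt $i of $attempts failed or timed out" >&2
    i=$((i + 1))
  done
  return 1
}

# Usage for this step, run from $PIG_HOME (up to 3 attempts, 3600 seconds each):
#   retry_with_timeout 3 3600 sudo ant clean jar-all -Dhadoopversion=23
```

Because the target list starts with clean, each retry rebuilds from scratch, just like rerunning the command by hand.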

Step 6) Test the Pig installation with the following command:

pig -help

Example Pig Script

We will use a Pig script to find the number of products sold in each country.

Input: Our input data set is a CSV file, SalesJan2009.csv
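The LOAD ... AS (...) statement in Step 5 assigns column names purely by position, so check the column order of the CSV before running the script. This sketch numbers the fields of a file's first line. The sample header is reconstructed from the AS clause of that LOAD statement; it is not copied from the real file. Note that PigStorage does not skip header rows. If SalesJan2009.csv starts with one, Pig loads it as an ordinary record.

```shell
# Print each comma-separated field of a file's first line with its 1-based position.
# Pig positions are 0-based ($0, $1, ...), so field N here is position N-1 in Pig Latin.
number_columns() {
  head -n 1 "$1" | tr ',' '\n' | awk '{ printf "%d\t%s\n", NR, $0 }'
}

# Illustrative header (field names from the tutorial's LOAD ... AS (...) clause).
sample=$(mktemp)
echo 'Transaction_date,Product,Price,Payment_Type,Name,City,State,Country,Account_Created,Last_Login,Latitude,Longitude' > "$sample"
number_columns "$sample"   # line 8 is "8<TAB>Country", i.e. $7 in Pig
rm -f "$sample"

# On the real file:  number_columns ~/input/SalesJan2009.csv
```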

Step 1) Start Hadoop

$HADOOP_HOME/sbin/start-dfs.sh

$HADOOP_HOME/sbin/start-yarn.sh

Step 2) In MapReduce mode, Pig reads its input from HDFS and stores the results back to HDFS.

Copy the file SalesJan2009.csv from the local file system (~/input/SalesJan2009.csv) to the root directory (/) of HDFS (Hadoop Distributed File System).

In this example, the file is in the folder ~/input. If your file is stored somewhere else, use that path instead.

$HADOOP_HOME/bin/hdfs dfs -copyFromLocal ~/input/SalesJan2009.csv /

Verify that the file was copied:

$HADOOP_HOME/bin/hdfs dfs -ls /

Step 3) Pig Configuration

First, navigate to $PIG_HOME/conf and back up the original pig.properties file:

cd $PIG_HOME/conf

sudo cp pig.properties pig.properties.original

Open pig.properties in a text editor of your choice and set the log file path with the pig.logfile property:

sudo gedit pig.properties

Pig's logger writes errors to this file.
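You can also script this edit. The hypothetical helper below (not a Pig tool) sets pig.logfile in a pig.properties file. It replaces an existing pig.logfile line, whether commented out or not, or appends one if none exists. It relies on GNU sed's -i option, as found on Ubuntu. The log path in the usage line is only an example.

```shell
# Hypothetical helper: set pig.logfile in a pig.properties file.
# Replaces an existing pig.logfile line (commented out or not); otherwise appends one.
# Uses GNU sed -i; the log path must not contain '|' or '&'.
set_pig_logfile() {
  props="$1"
  logpath="$2"
  if grep -q '^#*[[:space:]]*pig\.logfile=' "$props"; then
    sed -i "s|^#*[[:space:]]*pig\.logfile=.*|pig.logfile=$logpath|" "$props"
  else
    echo "pig.logfile=$logpath" >> "$props"
  fi
}

# Usage (example log path; writing the file may need root, as with sudo gedit):
#   set_pig_logfile "$PIG_HOME/conf/pig.properties" /home/hduser/pig_logs/
```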

Step 4) Run the command 'pig' to start Grunt, Pig's interactive shell for Pig Latin queries.

pig

Step 5) At the Grunt command prompt, execute the following Pig commands in order.

-- A. Load the file containing the data.

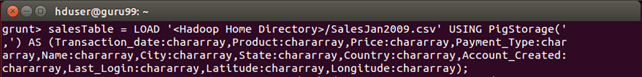

salesTable = LOAD '/SalesJan2009.csv' USING PigStorage(',') AS (Transaction_date:chararray,Product:chararray,Price:chararray,Payment_Type:chararray,Name:chararray,City:chararray,State:chararray,Country:chararray,Account_Created:chararray,Last_Login:chararray,Latitude:chararray,Longitude:chararray);

Press Enter after this command.

-- B. Group the data by the field Country.

GroupByCountry = GROUP salesTable BY Country;

-- C. For each tuple in GroupByCountry, generate a string of the form <country name>:<number of products sold>.

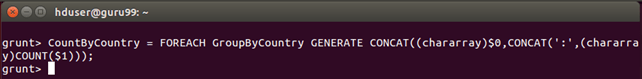

CountByCountry = FOREACH GroupByCountry GENERATE CONCAT((chararray)$0,CONCAT(':',(chararray)COUNT($1)));

Press Enter after this command.
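After GROUP, each tuple in GroupByCountry has two fields. The first is the group key ($0, which Pig also names group). The second is a bag holding every salesTable tuple with that key ($1, also named salesTable). That is why the FOREACH statement uses $0 for the country and COUNT($1) for the number of records. The awk sketch below prints that shape for hypothetical input. Its output notation only approximates how Pig's DUMP displays tuples and bags.

```shell
# Rough emulation of GROUP salesTable BY Country: one output line per country,
# showing the group key ($0) and a bag of the matching records ($1).
group_by_country() {
  awk -F',' '
    { sep = ($8 in bag) ? "," : ""; bag[$8] = bag[$8] sep "(" $0 ")" }
    END { for (c in bag) print "(" c ",{" bag[c] "})" }
  ' "$@" | LC_ALL=C sort
}

# Hypothetical 12-field rows in the column order of the LOAD schema.
printf '%s\n' \
  't1,Product1,1200,Visa,n1,c1,s1,Canada,a1,l1,0,0' \
  't2,Product2,3600,Visa,n2,c2,s2,Norway,a2,l2,0,0' \
  't3,Product1,1200,Amex,n3,c3,s3,Canada,a3,l3,0,0' | group_by_country
```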

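-- D. Store the results of the data flow in the directory pig_output_sales on HDFS. The path is relative, so Pig creates it under the user's HDFS home directory (/user/hduser here).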

STORE CountByCountry INTO 'pig_output_sales' USING PigStorage('\t');

This command takes some time to execute. In Grunt, STORE is what triggers the underlying MapReduce job(s). When it finishes, Pig prints a summary of the job run.
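The following rough local emulation, written in awk rather than Pig, follows the same steps as the four statements. It splits each line on every comma, as PigStorage(',') does; neither handles quoted fields that contain commas. It groups on field 8 (Country, $7 in Pig), counts the records in each group, and prints Country:count lines like the CONCAT expression. The sample rows are hypothetical, and the sketch sorts its lines so the output is deterministic. If the real file starts with a header row, both Pig and this sketch report it as a group named Country with a count of 1.

```shell
# Rough local emulation of the Grunt session above (awk, not Pig):
#   LOAD ... PigStorage(',')  -> split every line on every comma (no quote handling)
#   GROUP ... BY Country      -> key on field 8 (Country, $7 in Pig)
#   COUNT($1) inside CONCAT   -> number of records per key, printed as Country:count
count_by_country() {
  awk -F',' '{ n[$8]++ } END { for (c in n) print c ":" n[c] }' "$@" | LC_ALL=C sort
}

# Hypothetical 12-field rows in the column order of the LOAD schema.
printf '%s\n' \
  't1,Product1,1200,Visa,n1,c1,s1,Canada,a1,l1,0,0' \
  't2,Product2,3600,Visa,n2,c2,s2,United States,a2,l2,0,0' \
  't3,Product1,1200,Amex,n3,c3,s3,United States,a3,l3,0,0' | count_by_country
# prints "Canada:1" and then "United States:2"

# On the local copy of the real file:  count_by_country ~/input/SalesJan2009.csv
```

Running this against the local copy before you run the Pig job gives you a quick cross-check for the HDFS result.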

Step 6) View the result from the command line:

$HADOOP_HOME/bin/hdfs dfs -cat pig_output_sales/part-r-00000
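The result file holds one Country:count line per country. To rank countries by sales, sort the lines numerically on the part after the colon. This hypothetical helper assumes country names contain no colon.

```shell
# Hypothetical helper: rank "Country:count" lines by count, largest first.
# Assumes country names contain no ':' character.
top_countries() {
  # -t ':'   split each line at the colon
  # -k2,2nr  primary key: the count, numeric, descending
  # -k1,1    tie-breaker: country name, ascending
  LC_ALL=C sort -t ':' -k2,2nr -k1,1
}

# Usage against the job output on HDFS (top five countries):
#   $HADOOP_HOME/bin/hdfs dfs -cat pig_output_sales/part-r-00000 | top_countries | head -n 5
```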

Results can also be viewed through the web interface:

Open http://localhost:50070/ in a web browser.

Select 'Browse the filesystem' and navigate to /user/hduser/pig_output_sales.

Open part-r-00000.