LoadRunner Testing Tool – Components & Architecture

What is LoadRunner?

LoadRunner is a Performance Testing tool that was pioneered by Mercury Interactive in 1999. LoadRunner was acquired by HP in 2006, when HP bought Mercury Interactive. In 2016, HPE agreed to sell its software business, including LoadRunner, to Micro Focus.

LoadRunner supports various development tools, technologies and communication protocols. In fact, it is the only tool on the market that supports such a large number of protocols for Performance Testing. Performance test results produced by LoadRunner are used as a benchmark against other tools.


Why LoadRunner?

LoadRunner is not only a pioneering tool in Performance Testing, but it is also still the market leader. According to one recent assessment, LoadRunner holds about 85% of the Performance Testing market.

Broadly, LoadRunner supports RIA (Rich Internet Applications), Web 2.0 (HTTP/HTML, Ajax, Flex, Silverlight, etc.), Mobile, SAP, Oracle, MS SQL Server, Citrix, RTE, Mail and, above all, Windows Sockets. No competing tool on the market offers such a wide variety of protocols in a single tool.

An even more convincing reason to pick LoadRunner is its credibility. LoadRunner has long been established as a reference, and you will often find clients cross-verifying your performance benchmarks with it. You will be at an advantage if you already use LoadRunner for your performance testing needs.

LoadRunner is tightly integrated with other HP tools such as Unified Functional Testing (QTP) and ALM (Application Lifecycle Management), which enables you to perform your end-to-end testing processes.

LoadRunner works on the principle of simulating Virtual Users on the application under test. These Virtual Users, also termed VUsers, replicate a client's requests and expect a corresponding response in order to pass a transaction.

Why do you need Performance Testing?

An estimated loss of 4.4 billion in revenue is recorded annually due to poor web performance.

In today's age of Web 2.0, users click away if a website doesn't respond within 8 seconds. Imagine yourself waiting 5 seconds for a Google search or for a friend request on Facebook to go through. The repercussions of performance downtime are often more devastating than imagined. Examples include the outages that recently hit Bank of America Online Banking, Amazon Web Services, Intuit and BlackBerry.

According to Dun & Bradstreet, 59% of Fortune 500 companies experience an estimated 1.6 hours of downtime every week. Considering that the average Fortune 500 company, with a minimum of 10,000 employees, pays $56 per employee per hour, the labor cost of downtime for such an organization would be $896,000 weekly, translating into more than $46 million per year.
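The downtime figure is simple arithmetic: head count × hourly labor rate × hours of downtime. A minimal Python sketch that reproduces it, assuming (as the estimate implicitly does) that every employee is idle during the outage and that the weekly figure repeats for all 52 weeks:

```python
# Reproduce the Dun & Bradstreet labor-cost-of-downtime estimate quoted above.
EMPLOYEES = 10_000              # minimum head count of the "average" Fortune 500 company
HOURLY_RATE_USD = 56            # labor cost per employee per hour
DOWNTIME_HOURS_PER_WEEK = 1.6
WEEKS_PER_YEAR = 52             # assumption: the same downtime occurs every week


def labor_cost_of_downtime(employees: int, hourly_rate: float, hours: float) -> float:
    """Labor cost of idle time: every employee is paid while the system is down."""
    return employees * hourly_rate * hours


weekly = labor_cost_of_downtime(EMPLOYEES, HOURLY_RATE_USD, DOWNTIME_HOURS_PER_WEEK)
yearly = weekly * WEEKS_PER_YEAR
print(f"weekly: ${weekly:,.0f}")   # weekly: $896,000
print(f"yearly: ${yearly:,.0f}")   # yearly: $46,592,000
```

The yearly total of about $46.6 million is where the "more than $46 million per year" figure comes from.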

A mere 5-minute outage of Google.com (19 August 2013) is estimated to have cost the search giant as much as $545,000.

It is estimated that companies lost $1,100 in sales per second during a recent Amazon Web Services outage.

When an organization deploys a software system, it may encounter many scenarios that result in performance latency. A number of factors can degrade performance; examples include:

- Increased number of records present in the database

- Increased number of simultaneous requests made to the system

- A larger number of users accessing the system at the same time than in the past

What is LoadRunner Architecture?

Broadly speaking, the architecture of HP LoadRunner is complex, yet easy to understand.

Suppose you are assigned to check the performance of Amazon.com for 5,000 users.

In a real-life situation, these 5,000 users will not all be on the homepage; they will be spread across different sections of the website. How can we simulate these different behaviors?

VUGen

VUGen, or Virtual User Generator, is an IDE (Integrated Development Environment), i.e. a rich coding editor. VUGen is used to replicate the behavior of real users on the System Under Load (SUL). VUGen provides a "recording" feature which records the communication between client and server in the form of a coded script, also called a VUser script.

So, considering the above example, VUGen can record scripts that simulate the following business processes:

- Surfing the Products Page of Amazon.com

- Checkout

- Payment Processing

- Checking MyAccount Page
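Conceptually, a recorded VUser script is a sequence of requests grouped into named transactions, each timed and checked against an expected response. Real VuGen scripts for web protocols are C code in which transactions are delimited with `lr_start_transaction`/`lr_end_transaction`; the Python below is only a conceptual model of that idea, not VuGen output. The `fake_page` helper and the transaction names are hypothetical stand-ins so the sketch runs offline:

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class TransactionResult:
    name: str
    passed: bool
    elapsed_s: float


def run_transaction(name: str, send_request: Callable[[], str], expected: str) -> TransactionResult:
    """Time one request/response pair. The transaction passes only if the
    response contains the text the recorded script expects to see."""
    start = time.perf_counter()
    response = send_request()
    elapsed = time.perf_counter() - start
    return TransactionResult(name, expected in response, elapsed)


def fake_page(title: str) -> Callable[[], str]:
    """Hypothetical stand-in for real HTTP traffic so the sketch runs offline."""
    return lambda: f"<html><title>{title}</title></html>"


# One iteration of a hypothetical VUser script: (transaction name, request, expected text).
SCRIPT: List[Tuple[str, Callable[[], str], str]] = [
    ("Browse_Products", fake_page("Products"), "Products"),
    ("Checkout", fake_page("Checkout"), "Checkout"),
    ("Payment", fake_page("Payment"), "Payment"),
    ("My_Account", fake_page("MyAccount"), "MyAccount"),
]


def run_iteration(script) -> List[TransactionResult]:
    """Replay every transaction of the script once, in recorded order."""
    return [run_transaction(name, send, expected) for name, send, expected in script]


for result in run_iteration(SCRIPT):
    status = "PASS" if result.passed else "FAIL"
    print(f"{result.name}: {status} ({result.elapsed_s * 1000:.3f} ms)")
```

Keeping the "expected text" check separate from the request mirrors the idea that a VUser must receive the right response, not just any response, for a transaction to pass.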

Controller

Once a VUser script is finalized, the Controller, one of the main LoadRunner components, controls the load simulation by managing, for example:

- How many VUsers to simulate against each business process or VUser Group

- Behavior of VUsers (ramp up, ramp down, simultaneous or concurrent nature etc.)

- Nature of Load scenario e.g. Real Life or Goal Oriented or verifying SLA

- Which injectors to use, how many VUsers against each injector

- Collate results periodically

- IP Spoofing

- Error reporting

- Transaction reporting etc.

In our Amazon example, the Controller would configure the scenario for the VUGen scripts as follows:

1) 3500 Users are Surfing the Products Page of Amazon.com

2) 750 Users are in Checkout

3) 500 Users are performing Payment Processing

4) 250 Users are Checking MyAccount Page ONLY after 500 users have done Payment Processing
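The distribution above can be modeled as data: each VUser group gets a count, the counts must add up to the planned 5,000 users, and one group waits on another. In LoadRunner this is configured in the Controller, not in code; the Python below is a hypothetical model whose group names and helper functions are illustrative only:

```python
from typing import Dict, Tuple

TOTAL_VUSERS = 5000

# VUser groups (business processes) and how many VUsers each one runs.
GROUPS: Dict[str, int] = {
    "Browse_Products": 3500,
    "Checkout": 750,
    "Payment": 500,
    "My_Account": 250,
}

# Group -> (prerequisite group, completions required before it may start).
DEPENDENCIES: Dict[str, Tuple[str, int]] = {"My_Account": ("Payment", 500)}


def validate(groups: Dict[str, int], total: int) -> None:
    """Fail fast if the groups do not add up to the planned load."""
    assigned = sum(groups.values())
    if assigned != total:
        raise ValueError(f"groups assign {assigned} VUsers, expected {total}")


def can_start(group: str, completed: Dict[str, int]) -> bool:
    """True if `group` has no prerequisite, or enough prerequisite VUsers have finished."""
    if group not in DEPENDENCIES:
        return True
    prerequisite, needed = DEPENDENCIES[group]
    return completed.get(prerequisite, 0) >= needed


validate(GROUPS, TOTAL_VUSERS)
for name, count in GROUPS.items():
    print(f"{name}: {count} VUsers ({count / TOTAL_VUSERS:.0%} of load)")
```

For example, `can_start("My_Account", {"Payment": 499})` is `False` until the 500th Payment VUser completes.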

Even more complex scenarios are possible, for example:

- Initiate 5 VUsers every 2 seconds till a load of 3500 VUsers (surfing Amazon product page) is achieved.

- Iterate for 30 minutes

- Suspend iteration for 25 VUsers

- Re-start 20 VUsers

- Initiate 2 users (in Checkout, Payment Processing, MyAccounts Page) every second.

- 2500 VUsers will be generated at Machine A

- 2500 VUsers will be generated at Machine B
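The ramp-up rule "5 VUsers every 2 seconds until 3,500" fixes how long the ramp takes: 3,500 / 5 = 700 batches, so with the first batch at t = 0 the last batch starts at 699 × 2 = 1,398 seconds (about 23 minutes). A minimal sketch, assuming that first-batch-at-zero convention and an even split over the two machines; the function names are hypothetical:

```python
from typing import Dict, List, Tuple


def ramp_up(target: int, batch: int, interval_s: float) -> List[Tuple[float, int]]:
    """Return (elapsed_seconds, running_vusers) after each batch starts,
    assuming the first batch starts at t = 0."""
    schedule = []
    running = 0
    t = 0.0
    while running < target:
        running = min(running + batch, target)   # never overshoot the target
        schedule.append((t, running))
        t += interval_s
    return schedule


def split_across_injectors(total: int, injectors: List[str]) -> Dict[str, int]:
    """Spread VUsers as evenly as possible over the load generators."""
    base, extra = divmod(total, len(injectors))
    return {name: base + (1 if i < extra else 0) for i, name in enumerate(injectors)}


schedule = ramp_up(target=3500, batch=5, interval_s=2)
print(len(schedule), schedule[-1])   # 700 (1398.0, 3500)
print(split_across_injectors(5000, ["Machine A", "Machine B"]))
```

The ramp time matters when reading results: the "Iterate for 30 minutes" phase only represents full load once the ramp has finished.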

Agents Machine/Load Generators/Injectors

The HP LoadRunner Controller is responsible for simulating thousands of VUsers. These VUsers consume hardware resources such as processor and memory, which limits how many a single machine can simulate. Besides, if the Controller simulates these VUsers on the same machine where it resides, the results may not be precise. To address this concern, VUsers are spread across several machines, called Load Generators or Load Injectors.

As a general practice, the Controller resides on one machine and the load is generated from other machines. Depending on the protocol of the VUser scripts and the machine specifications, several Load Injectors may be required for full simulation. For example, VUsers for an HTTP script require 2-4 MB each, so a load of 10,000 VUsers needs roughly 20-40 GB of RAM, i.e. about 5 to 10 machines with 4 GB RAM each (more once operating-system overhead is accounted for).
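Injector sizing can be estimated from memory per VUser and RAM per machine. A back-of-envelope sketch, assuming memory is the only limiting resource (in practice CPU and network matter too); `reserved_gb` is a hypothetical allowance for the OS and agent, set to 0 by default:

```python
import math


def injectors_needed(vusers: int, mb_per_vuser: float, ram_gb_per_injector: float,
                     reserved_gb: float = 0.0) -> int:
    """Load generators needed when RAM is the limiting resource.

    `reserved_gb` is memory kept back for the OS and agent on each injector
    (an assumption; 0 by default, matching the back-of-envelope estimate)."""
    usable_mb = (ram_gb_per_injector - reserved_gb) * 1024
    vusers_per_injector = math.floor(usable_mb / mb_per_vuser)
    if vusers_per_injector < 1:
        raise ValueError("an injector cannot host even one VUser")
    return math.ceil(vusers / vusers_per_injector)


for mb in (2, 4):
    print(f"{mb} MB/VUser -> {injectors_needed(10_000, mb, 4)} injectors with 4 GB each")
```

At 2 MB per VUser a 4 GB machine hosts about 2,048 VUsers (5 machines for 10,000); at 4 MB it hosts about 1,024 (10 machines). Reserving even 1 GB per machine for the OS raises the 2 MB case to 7 machines.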

In our Amazon example, the output of this component is the distribution of VUsers across the injectors, e.g. 2,500 VUsers generated on Machine A and 2,500 on Machine B.

Analysis

Once load scenarios have been executed, the "Analysis" component of LoadRunner comes into play.

During execution, the Controller creates a dump of raw results, which contains information such as the LoadRunner version that created the dump and the scenario configuration.

All errors and exceptions are logged in a Microsoft Access database named output.mdb. The "Analysis" component reads this database file to perform various types of analysis and generate graphs.

These graphs show various trends that help explain the errors and failures under load, and thus help determine whether optimization is required in the SUL, the server (e.g. JBoss, Oracle) or the infrastructure.

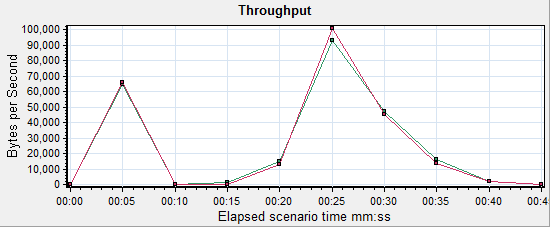

Below is an example where bandwidth could be creating a bottleneck. Let's say the web server has a 1 Gbps link, but the data traffic exceeds this capacity, causing subsequent users to suffer. To determine whether the system can cater to such needs, a Performance Engineer needs to analyze the application's behavior under abnormal load. Below is a graph LoadRunner generates to show bandwidth usage.
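Spotting a bandwidth bottleneck in such a graph amounts to comparing measured throughput with link capacity. A minimal sketch, assuming a 1 Gbps (1,000 Mbps) link and a 90% utilization threshold; both the threshold and the sample values are hypothetical:

```python
from typing import List, Sequence

LINK_CAPACITY_MBPS = 1000  # 1 Gbps web-server link, as in the example above


def saturated_intervals(samples_mbps: Sequence[float],
                        capacity_mbps: float = LINK_CAPACITY_MBPS,
                        threshold: float = 0.9) -> List[int]:
    """Return indices of sampling intervals whose throughput reaches
    `threshold` (default 90%) of link capacity: a likely bandwidth bottleneck."""
    limit = capacity_mbps * threshold
    return [i for i, mbps in enumerate(samples_mbps) if mbps >= limit]


# Hypothetical throughput samples (Mbps), one per monitoring interval.
samples = [420, 610, 880, 930, 1000, 990, 700]
print(saturated_intervals(samples))  # [3, 4, 5]
```

If response times degrade in exactly the flagged intervals, bandwidth rather than the application code is the first suspect.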

How to Do Performance Testing

The Performance Testing roadmap can be broadly divided into 5 steps:

- Planning for Load Test

- Create VUGen Scripts

- Scenario Creation

- Scenario Execution

- Results Analysis (followed by system tweaking)

Now that you have LoadRunner installed, let's understand the steps involved in the process one by one.

Steps involved in Performance Testing process

Step 1) Planning for the Load Test

Planning for Performance Testing is different from planning a SIT (System Integration Testing) or UAT (User Acceptance Testing). Planning can be further divided into small stages as described below:

Assemble Your Team

When getting started with LoadRunner Testing, it is best to document who will be participating in the activity from each team involved during the process.

Project Manager:

Nominate the project manager who will own this activity and serve as point person for escalation.

Function Expert/ Business Analyst:

Provides usage analysis of the SUL and expertise on the business functionality of the website/SUL

Performance Testing Expert:

Creates the automated performance tests and executes load scenarios

System Architect:

Provides blueprint of the SUL

Web Developer and SME:

- Maintains the website and provides monitoring

- Develops website and fixes bugs

System Administrator:

- Maintains involved servers throughout a testing project

Outline applications and Business Processes involved:

Successful load testing requires that you plan to carry out specific business processes. A Business Process consists of clearly defined steps that match the desired business transactions, so as to accomplish your load testing objectives.

A requirements matrix can be prepared to estimate the user load on the system. Below is an example of an attendance system in a company:

In the above example, the figures give the number of users connected to the application (SUL) at a given hour. We can extract the maximum number of users connected to each business process at any hour of the day; this is calculated in the rightmost column.

Similarly, we can determine the total number of users connected to the application (SUL) at each hour of the day. This is calculated in the last row.

These 2 facts combined give us the total number of users with which we need to test the system for performance.
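The two calculations on the requirements matrix are a per-row maximum and a per-column sum. Because the attendance-system figures are not reproduced in this text, the numbers below are invented for illustration only, as are the process names:

```python
from typing import Dict, List

# Hypothetical hourly user counts per business process (invented values).
HOURS = ["09:00", "10:00", "11:00", "12:00"]
MATRIX: Dict[str, List[int]] = {
    "Mark attendance": [120, 40, 10, 5],
    "Apply for leave": [10, 25, 30, 15],
    "Approve leave": [5, 20, 25, 30],
}


def peak_per_process(matrix: Dict[str, List[int]]) -> Dict[str, int]:
    """Rightmost column: maximum users seen by each business process in any hour."""
    return {process: max(counts) for process, counts in matrix.items()}


def total_per_hour(matrix: Dict[str, List[int]], hours: List[str]) -> Dict[str, int]:
    """Last row: total users connected to the application in each hour."""
    return {hour: sum(counts[i] for counts in matrix.values()) for i, hour in enumerate(hours)}


peaks = peak_per_process(MATRIX)
totals = total_per_hour(MATRIX, HOURS)
peak_hour = max(totals, key=totals.get)
print(peaks)
print(totals, "busiest hour:", peak_hour)
```

With these numbers the busiest hour (09:00) has 135 concurrent users, while the per-process peaks sum to 180. One way to read the two facts together: the peak-hour total gives the realistic concurrent load, and the per-process peaks (which occur at different hours) give a conservative upper bound for sizing each VUser group.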

Define Test Data Management Procedures

Statistics and observations drawn from Performance Testing are greatly influenced by numerous factors, as briefed earlier. It is therefore critical to prepare test data for Performance Testing. Sometimes a business process consumes one data set and produces a different one. Take the following example:

- A user ‘A’ creates a financial contract and submits it for review.

- Another user 'B' approves 200 of the contracts created by user 'A' each day

- Another user 'C' pays about 150 of the contracts approved by user 'B' each day

In this situation, user B needs 200 contracts in the 'created' state. In addition, user C needs 150 contracts in the 'approved' state in order to simulate the payment of 150 contracts.

This implies that you must create at least 200 + 150 = 350 contracts.

After that, approve 150 contracts to serve as Test data for User C – the remaining 200 contracts will serve as Test Data for User B.
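The data-preparation rule can be expressed as a small function: create enough contracts for both consumers, then move user C's share to the "approved" state before the test starts. A minimal sketch that models contracts only by their state; the function name is hypothetical:

```python
from collections import Counter
from typing import List


def prepare_contracts(to_approve: int, to_pay: int) -> List[str]:
    """Create enough contracts so that user B has `to_approve` contracts in the
    'created' state and user C has `to_pay` contracts in the 'approved' state."""
    contracts = ["created"] * (to_approve + to_pay)   # user A's step: create them all
    for i in range(to_pay):                            # pre-approve user C's share
        contracts[i] = "approved"
    return contracts


states = Counter(prepare_contracts(to_approve=200, to_pay=150))
print(sum(states.values()), states["created"], states["approved"])   # 350 200 150
```

Preparing both pools up front lets users B and C run at the same time during the test, instead of user C waiting for user B's approvals.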

Outline Monitors

Consider every factor that could possibly affect the performance of a system. For example, reduced hardware can have a potential impact on SUL (System Under Load) performance.

List all these factors and set up monitors so you can gauge them. Here are a few examples:

- Processor (for Web Server, Application Server, Database Server, and Injectors)

- RAM (for Web Server, Application Server, Database Server, and Injectors)

- Web/App Server (for example IIS, JBoss, Jaguar Server, Tomcat, etc.)

- DB Server (PGA and SGA size in the case of Oracle; stored procedures (SPs) for Oracle and MS SQL Server, etc.)

- Network bandwidth utilization

- Internal and External NIC in case of clustering

- Load Balancer (and that it is distributing load evenly on all nodes of clusters)

- Data flux (calculate how much data moves between client and server, then check whether the NIC's capacity is sufficient to support X number of users)
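The data-flux check in the last item reduces to a unit conversion: bytes per user per second against NIC capacity in bits per second. A sketch, assuming decimal units (1 KB = 1,000 bytes, 1 Mbps = 1,000,000 bits/s) and a hypothetical 80% headroom so the NIC is not planned to run flat out; the 50 KB/s per-user figure is invented:

```python
def max_users_for_nic(nic_capacity_mbps: float, kb_per_user_per_s: float,
                      headroom: float = 0.8) -> int:
    """Users a NIC can serve if each user moves `kb_per_user_per_s` kilobytes
    per second (request + response). `headroom` caps utilization at 80% (assumption)."""
    usable_bits_per_s = nic_capacity_mbps * 1_000_000 * headroom
    bits_per_user_per_s = kb_per_user_per_s * 1_000 * 8
    return int(usable_bits_per_s // bits_per_user_per_s)


def nic_is_sufficient(users: int, nic_capacity_mbps: float, kb_per_user_per_s: float,
                      headroom: float = 0.8) -> bool:
    """Can the NIC carry the traffic of `users` simultaneous users?"""
    return users <= max_users_for_nic(nic_capacity_mbps, kb_per_user_per_s, headroom)


# Hypothetical measurement: each user moves 50 KB/s on average over a 1 Gbps NIC.
print(max_users_for_nic(1000, 50))          # 2000
print(nic_is_sufficient(2500, 1000, 50))    # False
```

Measure the per-user data volume from a short baseline run rather than guessing it, since it varies widely with page weight and caching.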

Step 2) Create VUGen Scripts

The next step after planning is to create VUser scripts.

Step 3) Scenario Creation

The next step is to create your load scenario.

Step 4) Scenario Execution

Scenario execution is where you emulate user load on the server by instructing multiple VUsers to perform tasks simultaneously.

You can set the level of a load by increasing and decreasing the number of VUsers that perform tasks at the same time.

This execution may put the server under stress and cause it to behave abnormally. Exposing such behavior is the very purpose of Performance Testing. The results are then used for detailed analysis and root cause identification.
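The idea of "many VUsers performing tasks simultaneously, with the load set by the VUser count" can be sketched with a thread pool. This is a conceptual Python model, not how LoadRunner is driven; the `time.sleep(0.01)` call is a hypothetical stand-in for a real request to the server:

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import List


def vuser(iterations: int, think_time_s: float) -> List[float]:
    """One virtual user: repeat a task and record each response time."""
    timings = []
    for _ in range(iterations):
        start = time.perf_counter()
        time.sleep(0.01)             # hypothetical stand-in for a request to the server
        timings.append(time.perf_counter() - start)
        time.sleep(think_time_s)     # pause between iterations, like a real user
    return timings


def run_scenario(vusers: int, iterations: int = 3, think_time_s: float = 0.0) -> List[float]:
    """Run `vusers` VUsers at the same time; raising or lowering `vusers`
    raises or lowers the load placed on the server."""
    with ThreadPoolExecutor(max_workers=vusers) as pool:
        futures = [pool.submit(vuser, iterations, think_time_s) for _ in range(vusers)]
        return [t for f in futures for t in f.result()]


for level in (5, 20):
    timings = run_scenario(vusers=level)
    print(f"{level} VUsers: {len(timings)} samples, "
          f"avg {sum(timings) / len(timings) * 1000:.1f} ms")
```

Against this sleep stand-in the response time stays flat as the VUser count grows; against a real server, a rising average at higher levels is exactly the stress behavior the execution step is meant to expose.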

Step 5) Results Analysis (followed by system tweaking)

During scenario execution, LoadRunner records the performance of the application under different loads. The statistics drawn from test execution are saved and a detailed analysis is performed. The 'HP Analysis' tool generates various graphs which help identify the root causes behind degraded system performance as well as system failures.

Some of the graphs obtained include:

- Time to First Buffer

- Transaction Response Time

- Average Transaction Response Time

- Hits Per Second

- Windows Resources

- Error Statistics

- Transaction Summary
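Several of these graphs are aggregations over raw per-transaction samples. A minimal sketch of three of them, assuming a simplified hypothetical record format (real Analysis data is richer); as simplifications, the average counts passed transactions only, and hits are attributed to the second in which a transaction ended:

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Sample:
    second: int          # elapsed scenario time (s) when the transaction ended
    transaction: str
    response_s: float
    passed: bool
    hits: int            # HTTP requests the transaction sent to the server


def average_response_times(samples: Iterable[Sample]) -> Dict[str, float]:
    """Average Transaction Response Time per transaction (passed ones only)."""
    totals: Dict[str, list] = defaultdict(lambda: [0.0, 0])
    for s in samples:
        if s.passed:
            totals[s.transaction][0] += s.response_s
            totals[s.transaction][1] += 1
    return {name: total / count for name, (total, count) in totals.items()}


def hits_per_second(samples: Iterable[Sample]) -> Dict[int, int]:
    """Hits per Second: HTTP requests sent to the server in each second."""
    hits: Dict[int, int] = defaultdict(int)
    for s in samples:
        hits[s.second] += s.hits
    return dict(sorted(hits.items()))


def transaction_summary(samples: Iterable[Sample]) -> Dict[str, Dict[str, int]]:
    """Transaction Summary: pass/fail counts per transaction."""
    summary: Dict[str, Dict[str, int]] = defaultdict(lambda: {"pass": 0, "fail": 0})
    for s in samples:
        summary[s.transaction]["pass" if s.passed else "fail"] += 1
    return dict(summary)


# Hypothetical raw results from a short run.
SAMPLES = [
    Sample(0, "Checkout", 1.2, True, 3),
    Sample(0, "Payment", 2.0, True, 4),
    Sample(1, "Checkout", 1.8, True, 3),
    Sample(1, "Payment", 5.5, False, 2),
]
print(average_response_times(SAMPLES))
print(hits_per_second(SAMPLES))
print(transaction_summary(SAMPLES))
```

Reading the outputs together is the point: a transaction whose failures climb while hits per second flatten often indicates a server-side limit rather than a script problem.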