How To Install HBase on Ubuntu (HBase Installation)

Apache HBase Installation Modes

Apache HBase can be installed in three modes. The features of these modes are mentioned below.

1) Standalone mode installation (No dependency on Hadoop system)

- This is the default mode of HBase

- It runs against the local file system

- It doesn’t use Hadoop HDFS

- Runs in a single JVM: HMaster, a region server, and ZooKeeper share one process, so jps shows only HMaster

- Not recommended for production environments

2) Pseudo-Distributed mode installation (Single node Hadoop system + HBase installation)

- It runs on Hadoop HDFS

- All daemons run on a single node

- Not recommended for production environments; intended for testing and prototyping

3) Fully Distributed mode installation (Multinode Hadoop environment + HBase installation)

- It runs on Hadoop HDFS

- Daemons run across the nodes present in the cluster

- Highly recommended for production environment

For Hadoop installation, refer to the guide here.

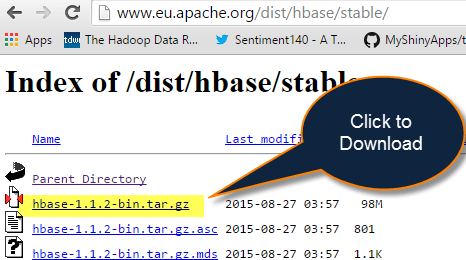

How to Download HBase tar file stable version

Step 1) Go to the link here to download HBase. It will open a webpage as shown below.

Step 2) Select the stable version. This guide uses version 1.1.2.

Step 3) Click hbase-1.1.2-bin.tar.gz to download the tar file, then copy the tar file to an installation location.

How To Install HBase in Ubuntu with Standalone Mode

Here is the step by step process of HBase standalone mode installation in Ubuntu:

Step 1) Place the tar file

Place hbase-1.1.2-bin.tar.gz in /home/hduser

Step 2) Extract it by running the command $ tar -xvf hbase-1.1.2-bin.tar.gz

This extracts the contents and creates the directory hbase-1.1.2 in /home/hduser
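Steps 1 and 2 can be scripted roughly as follows. This is a minimal sketch, not part of HBase: the function name extract_hbase is hypothetical, and the paths are the ones used in this guide.

```shell
# Sketch: extract the HBase tarball into an install directory.
# extract_hbase is a hypothetical helper name, not an HBase command.
extract_hbase() {
  local tarball="$1" dest="$2"
  if [ ! -f "$tarball" ]; then
    echo "tarball not found: $tarball" >&2
    return 1
  fi
  mkdir -p "$dest"
  # -x extract, -z gunzip, -f archive file, -C target directory
  tar -xzf "$tarball" -C "$dest"
}

# Usage with this guide's paths (runs only if the tarball is present).
if [ -f /home/hduser/hbase-1.1.2-bin.tar.gz ]; then
  extract_hbase /home/hduser/hbase-1.1.2-bin.tar.gz /home/hduser
fi
```

The helper refuses to run when the tarball is missing, which avoids a confusing tar error after a failed download.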

Step 3) Open hbase-env.sh

Open hbase-env.sh in the hbase-1.1.2/conf directory and set the JAVA_HOME path to your Java installation.
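As a rough illustration of this step, the snippet below appends a JAVA_HOME export to hbase-env.sh. The helper name set_java_home and the variable names are hypothetical, and the JDK path is only an example of a typical Ubuntu location; substitute the path of the JDK installed on your machine.

```shell
# Sketch: append a JAVA_HOME export to conf/hbase-env.sh.
# The JDK path is an assumption (an example Ubuntu location), not a
# value taken from this guide.
HBASE_ENV="${HBASE_ENV:-/home/hduser/hbase-1.1.2/conf/hbase-env.sh}"
JAVA_HOME_PATH="${JAVA_HOME_PATH:-/usr/lib/jvm/java-8-openjdk-amd64}"

# set_java_home is a hypothetical helper name.
set_java_home() {
  local env_file="$1" java_home="$2"
  # Do nothing if an uncommented JAVA_HOME export already exists.
  if grep -q '^export JAVA_HOME=' "$env_file" 2>/dev/null; then
    return 0
  fi
  echo "export JAVA_HOME=$java_home" >> "$env_file"
}

# Runs only if the file exists at the expected location.
if [ -f "$HBASE_ENV" ]; then
  set_java_home "$HBASE_ENV" "$JAVA_HOME_PATH"
fi
```

Running it twice leaves a single export line, so it is safe to repeat.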

Step 4) Open the file and set the path

Open the ~/.bashrc file and set the HBASE_HOME path as shown below

export HBASE_HOME=/home/hduser/hbase-1.1.2
export PATH=$PATH:$HBASE_HOME/bin

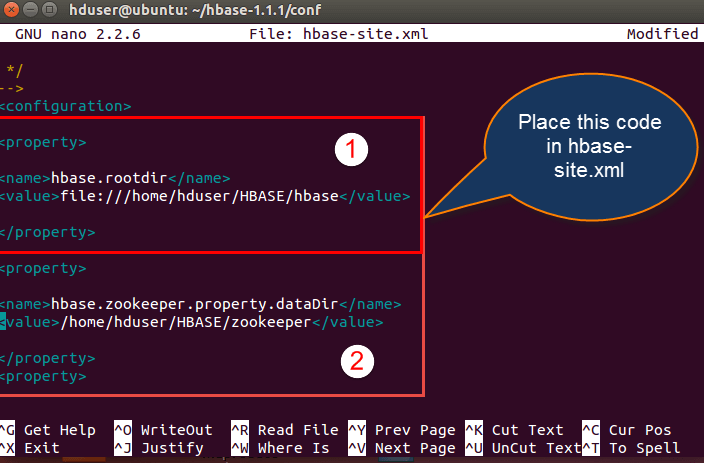

Step 5) Add properties in the file

Open hbase-site.xml in the conf directory and place the following properties inside its <configuration> element

hduser@ubuntu$ gedit hbase-site.xml

<property>
  <name>hbase.rootdir</name>
  <value>file:///home/hduser/HBASE/hbase</value>
</property>
<property>
  <name>hbase.zookeeper.property.dataDir</name>
  <value>/home/hduser/HBASE/zookeeper</value>
</property>

Here we are setting two properties:

- hbase.rootdir, the HBase root directory, and

- hbase.zookeeper.property.dataDir, the data directory for ZooKeeper.

HMaster and ZooKeeper read these settings from hbase-site.xml.

Step 6) Mention the IPs

Open the hosts file in the /etc directory and enter the IPs as shown below.

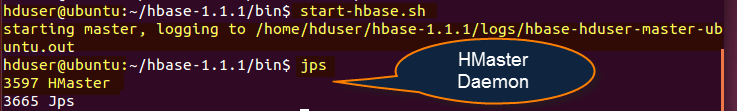

Step 7) Now run start-hbase.sh from the hbase-1.1.2/bin directory.

Then use the jps command to check whether HMaster is running.
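The jps check can be automated with a small filter. This is a sketch only: has_daemon is a hypothetical helper that reads jps output (one "pid ClassName" pair per line) from standard input.

```shell
# Sketch: test whether a daemon name appears in `jps` output.
# has_daemon is a hypothetical helper; it reads jps output on stdin.
has_daemon() {
  # Match the class name column exactly, not as a substring.
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Runs only where the JDK's jps tool is on the PATH.
if command -v jps >/dev/null 2>&1; then
  if jps | has_daemon HMaster; then
    echo "HMaster is running"
  else
    echo "HMaster is not running; check the logs directory under HBASE_HOME"
  fi
fi
```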

Step 8) Start the HBase Shell

Start the HBase shell with the command “hbase shell”. It enters interactive shell mode, where we can run all types of HBase commands.

The standalone mode does not require Hadoop daemons to start. HBase can run independently.
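To confirm the shell works, a few commands can be run from a script file instead of typing them interactively. The sketch below is illustrative: the table name smoke_test and the column family cf are made-up examples, and the script file location is an assumption.

```shell
# Sketch: run a few HBase shell commands from a script file.
# The table name smoke_test and column family cf are made-up examples.
script="${TMPDIR:-/tmp}/hbase_smoke_test.txt"
cat > "$script" <<'EOF'
status
create 'smoke_test', 'cf'
put 'smoke_test', 'row1', 'cf:a', 'value1'
scan 'smoke_test'
disable 'smoke_test'
drop 'smoke_test'
exit
EOF

# `hbase shell <file>` executes the commands in the file.
# Runs only where the hbase launcher is on the PATH.
if command -v hbase >/dev/null 2>&1; then
  hbase shell "$script"
fi
```

The final exit line matters: without it the shell stays open after the script finishes.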

HBase Pseudo Distributed Mode of Installation

This is another method for Apache HBase Installation, known as Pseudo Distributed mode of Installation.

Below are the steps to install HBase through Pseudo Distributed mode:

Step 1) Place hbase-1.1.2-bin.tar.gz in /home/hduser

Step 2) Extract it by running the command $ tar -xvf hbase-1.1.2-bin.tar.gz. This extracts the contents and creates the directory hbase-1.1.2 in /home/hduser

Step 3) Open hbase-env.sh and set the JAVA_HOME path and the region servers file path (HBASE_REGIONSERVERS), then export them

Step 4) In this step, we open the ~/.bashrc file and set the HBASE_HOME path as shown in the screenshot.

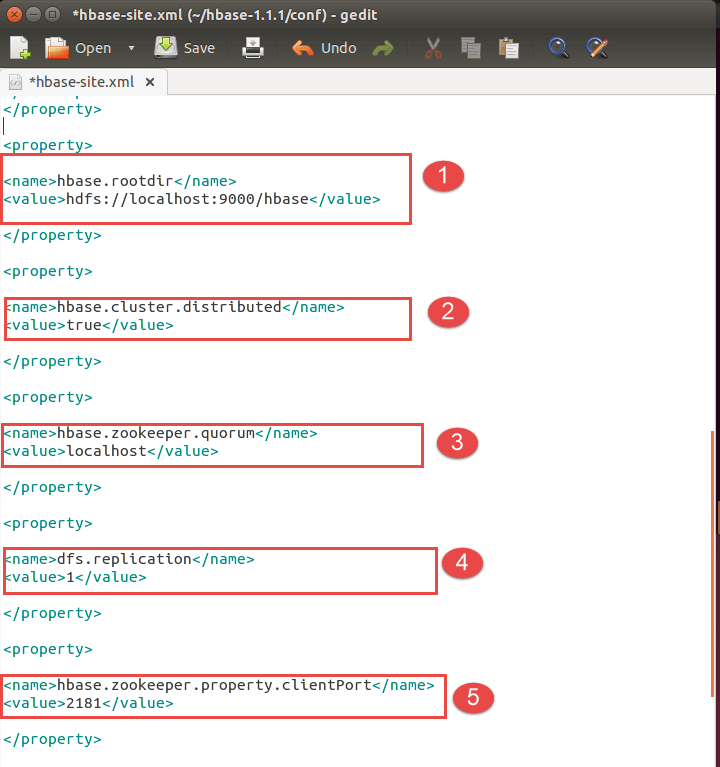

Step 5) Open hbase-site.xml and set the properties below in the file.

<property>
  <name>hbase.rootdir</name>
  <value>hdfs://localhost:9000/hbase</value>
</property>
<property>
  <name>hbase.cluster.distributed</name>
  <value>true</value>
</property>
<property>
  <name>hbase.zookeeper.quorum</name>
  <value>localhost</value>
</property>
<property>
  <name>dfs.replication</name>
  <value>1</value>
</property>
<property>
  <name>hbase.zookeeper.property.clientPort</name>
  <value>2181</value>
</property>
<property>
  <name>hbase.zookeeper.property.dataDir</name>
  <value>/home/hduser/hbase/zookeeper</value>
</property>

- hbase.rootdir sets the HBase root directory

- hbase.cluster.distributed must be set to true for a distributed setup

- hbase.zookeeper.quorum lists the ZooKeeper quorum hosts

- dfs.replication sets the replication factor. Here it is set to 1. In fully distributed mode, multiple data nodes are present, so replication can be increased by setting dfs.replication to a value greater than 1

- hbase.zookeeper.property.clientPort sets the port on which clients connect to ZooKeeper

- hbase.zookeeper.property.dataDir sets the ZooKeeper data directory
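The host and port in hbase.rootdir must match the file system address that Hadoop serves. The sketch below compares the two; it assumes a Hadoop 2.x style installation where the key is fs.defaultFS, and rootdir_on_fs is a hypothetical helper name.

```shell
# Sketch: confirm hbase.rootdir lives on the file system Hadoop serves.
# rootdir_on_fs is a hypothetical helper, not an HBase or Hadoop command.
rootdir_on_fs() {
  local rootdir="$1"
  local default_fs="${2%/}"   # drop a trailing slash, if any
  case "$rootdir" in
    "$default_fs"/*) return 0 ;;
    *) return 1 ;;
  esac
}

rootdir="hdfs://localhost:9000/hbase"   # hbase.rootdir value used in this guide
# Runs only where the hdfs launcher is on the PATH.
if command -v hdfs >/dev/null 2>&1; then
  default_fs="$(hdfs getconf -confKey fs.defaultFS)"
  if rootdir_on_fs "$rootdir" "$default_fs"; then
    echo "hbase.rootdir matches fs.defaultFS ($default_fs)"
  else
    echo "mismatch: hbase.rootdir=$rootdir, fs.defaultFS=$default_fs"
  fi
fi
```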

Step 6) Start the Hadoop daemons first, then start the HBase daemons

First start the Hadoop daemons with the “./start-all.sh” command.

Then start the HBase daemons with start-hbase.sh.

Now check the running daemons with jps.
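In this mode jps should list several daemons rather than one. The following sketch reports which expected daemons are absent. The helper missing_daemons is hypothetical, and the expected list assumes HDFS is running and HBase manages its own ZooKeeper (which appears as HQuorumPeer); adjust it to your setup.

```shell
# Sketch: list expected daemons that are absent from `jps` output.
# missing_daemons is a hypothetical helper; it reads jps output on stdin
# and takes the expected class names as arguments.
missing_daemons() {
  local running name
  running="$(awk '{ print $2 }')"
  for name in "$@"; do
    printf '%s\n' "$running" | grep -qx "$name" || printf '%s\n' "$name"
  done
}

# Assumed daemon list for a single-node HDFS plus HBase setup.
expected="NameNode DataNode HMaster HRegionServer HQuorumPeer"
if command -v jps >/dev/null 2>&1; then
  # $expected is left unquoted on purpose so it splits into words.
  jps | missing_daemons $expected
fi
```

An empty result means every expected daemon is up; any name printed points to the log file worth reading first.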

HBase Fully Distributed Mode Installation

- This setup works in Hadoop cluster mode, where the daemons are spread across multiple running nodes.

- The installation is the same as in pseudo-distributed mode; the only difference is that the daemons run on multiple nodes.

- The settings in hbase-site.xml and hbase-env.sh are the same as in pseudo-distributed mode, except that localhost is replaced by the real host names of the cluster nodes.
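One file that does change in a cluster is conf/regionservers, which start-hbase.sh reads to find the hosts that should run a region server, one host name per line. A minimal sketch follows; write_regionservers is a hypothetical helper and node1 to node3 are placeholder host names, not values from this guide.

```shell
# Sketch: write conf/regionservers, one region server host name per line.
# write_regionservers is a hypothetical helper name.
write_regionservers() {
  local target="$1"
  shift
  printf '%s\n' "$@" > "$target"
}

# node1..node3 are placeholder host names; replace them with your own.
conf_dir="${HBASE_HOME:-/home/hduser/hbase-1.1.2}/conf"
if [ -d "$conf_dir" ]; then
  write_regionservers "$conf_dir/regionservers" node1 node2 node3
fi
```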

HBase Installation Troubleshooting

1) Problem Statement: Master server initializes but region servers do not initialize

The Master and the region servers communicate through their IP addresses. The Master believes the region servers have the IP address 127.0.0.1, which is localhost and resolves to the master server's own local host.

Cause:

The region servers erroneously inform the Master server that their IP address is 127.0.0.1.

Solution:

- Remove the region server's host name from the localhost (127.0.0.1) entry in the hosts file

- Hosts file location: /etc/hosts

What to change:

Open /etc/hosts and go to this location

127.0.0.1 fully.qualified.regionservername regionservername localhost.localdomain localhost
::1 localhost3.localdomain3 localdomain3

Modify the above configuration as shown below (remove the region server names from the 127.0.0.1 line)

127.0.0.1 localhost.localdomain localhost
::1 localhost3.localdomain3 localdomain3
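Whether a machine is affected can be checked before editing the file. The sketch below scans a hosts file for a host name on the 127.0.0.1 line; loopback_maps_host is a hypothetical helper name, and the check is a simple text match rather than a full resolver lookup.

```shell
# Sketch: report whether a hosts file maps a host name to 127.0.0.1.
# loopback_maps_host is a hypothetical helper name.
loopback_maps_host() {
  local hosts_file="$1" host="$2"
  awk -v host="$host" '
    $1 == "127.0.0.1" {
      for (i = 2; i <= NF; i++) if ($i == host) found = 1
    }
    END { exit !found }
  ' "$hosts_file"
}

# Check this machine's own host name against /etc/hosts.
if loopback_maps_host /etc/hosts "$(hostname)"; then
  echo "$(hostname) is listed on the 127.0.0.1 line of /etc/hosts; remove it from that line"
fi
```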

2) Problem Statement: Couldn’t find my address: XYZ in list of Zookeeper quorum servers

Cause:

- A ZooKeeper server was not able to start and throws this error, where XYZ is the name of the server.

- HBase attempts to start a ZooKeeper server on a machine, but that machine cannot find itself in the hbase.zookeeper.quorum configuration.

Solution:

- Replace the host name in hbase.zookeeper.quorum with the host name presented in the error message

- If a DNS server is available, set the following properties in hbase-site.xml so that the machine resolves to the correct name:

- hbase.zookeeper.dns.interface

- hbase.zookeeper.dns.nameserver

3) Problem Statement: Created Root Directory for HBase through Hadoop DFS

- On startup, the Master says that you need to run the HBase migrations script.

- On running it, the HBase migrations script reports that there are no files in the root directory.

Cause:

- A new directory for HBase was created using the Hadoop Distributed File System

- HBase expects one of two conditions:

1) The root directory does not exist

2) The root directory was already initialized by a previous run of HBase

Solution:

- Make sure the HBase root directory either does not currently exist or has been initialized by a previous run of HBase.

- To fix the problem, follow these steps

Step 1) Use Hadoop dfs to delete the HBase root directory

Step 2) Let HBase create and initialize the directory by itself
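Step 1 can be sketched as below. It assumes a Hadoop 2.x style hdfs command and the /hbase root directory used in this guide; reset_hbase_rootdir and DRY_RUN are hypothetical names. The command deletes all HBase data, so the sketch only prints what it would do unless DRY_RUN is set to 0.

```shell
# Sketch: remove the HBase root directory from HDFS so HBase can recreate it.
# WARNING: a real run deletes all HBase data under that directory.
# reset_hbase_rootdir and DRY_RUN are hypothetical names.
reset_hbase_rootdir() {
  local rootdir="$1"
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "would run: hdfs dfs -rm -r $rootdir"
  else
    hdfs dfs -rm -r "$rootdir"
  fi
}

# Dry run by default; set DRY_RUN=0 (with HBase stopped) to really delete.
reset_hbase_rootdir /hbase
```

After the directory is removed, starting HBase again lets it create and initialize the root directory on its own.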

4) Problem statement: Zookeeper session expired events

Cause:

- HMaster or HRegionServer shuts down after throwing exceptions

- The logs show the actual exceptions that were thrown

The following log excerpt shows the exceptions thrown because of a ZooKeeper session expiry.

Log file contents:

WARN org.apache.zookeeper.ClientCnxn: Exception closing session 0x278bd16a96000f to sun.nio.ch.SelectionKeyImpl@355811ec
java.io.IOException: TIMED OUT
  at org.apache.zookeeper.ClientCnxn$SendThread.run(ClientCnxn.java:906)
WARN org.apache.hadoop.hbase.util.Sleeper: We slept 79410ms, ten times longer than scheduled: 5000
INFO org.apache.zookeeper.ClientCnxn: Attempting connection to server hostname/IP:PORT
INFO org.apache.zookeeper.ClientCnxn: Priming connection to java.nio.channels.SocketChannel[connected local=/IP:PORT remote=hostname/IP:PORT]
INFO org.apache.zookeeper.ClientCnxn: Server connection successful
WARN org.apache.zookeeper.ClientCnxn: Exception closing session 0x278bd16a96000d to sun.nio.ch.SelectionKeyImpl@3544d65e
java.io.IOException: Session Expired
  at org.apache.zookeeper.ClientCnxn$SendThread.readConnectResult(ClientCnxn.java:589)
  at org.apache.zookeeper.ClientCnxn$SendThread.doIO(ClientCnxn.java:709)
  at org.apache.zookeeper.ClientCnxn$SendThread.run(ClientCnxn.java:945)
ERROR org.apache.hadoop.hbase.regionserver.HRegionServer: ZooKeeper session expired

Solution:

- The default HBase heap size is 1 GB. For long-running imports, give HBase more than 1 GB of RAM (configured in hbase-env.sh).

- Increase the session timeout for ZooKeeper.

- To increase the ZooKeeper session timeout, modify the following properties in “hbase-site.xml” in the HBase conf folder.

- The default session timeout is 60 seconds. We can change it to 120 seconds (the value is given in milliseconds) as shown below

<property>
  <name>zookeeper.session.timeout</name>
  <value>120000</value>
</property>
<property>
  <name>hbase.zookeeper.property.tickTime</name>
  <value>6000</value>
</property>
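The two properties are set together because ZooKeeper, by default, caps a session timeout at 20 times the tick time. A quick arithmetic check, written as a sketch with made-up variable names, shows that a 120 second timeout (120000 ms) needs a tick time of at least 6000 ms:

```shell
# Sketch: ZooKeeper's default maximum session timeout is 20 x tickTime,
# so the requested timeout and the tick time must agree.
tick_time_ms=6000
requested_timeout_ms=120000   # 120 seconds expressed in milliseconds
max_timeout_ms=$((tick_time_ms * 20))

if [ "$requested_timeout_ms" -le "$max_timeout_ms" ]; then
  echo "timeout ${requested_timeout_ms}ms fits within the ${max_timeout_ms}ms cap"
else
  echo "timeout ${requested_timeout_ms}ms exceeds the ${max_timeout_ms}ms cap"
fi
```

A larger timeout with the same tick time would be cut down to the cap during session negotiation, so raise both values together.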