Model Seq2seq (sekvence k sekvenci) s PyTorch

Co je NLP?

NLP neboli zpracování přirozeného jazyka je jednou z populárních odvětví umělé inteligence, která pomáhá počítačům porozumět, manipulovat nebo reagovat na člověka v jejich přirozeném jazyce. NLP je motorem vzadu Google Translate což nám pomáhá porozumět jiným jazykům.

Co je Seq2Seq?

Seq2Seq je metoda strojového překladu a jazykového zpracování založeného na kodéru a dekodéru, která mapuje vstup sekvence na výstup sekvence s hodnotou tagu a pozornosti. Cílem je použít 2 RNN, které budou spolupracovat se speciálním tokenem a pokusí se předpovědět další stavovou sekvenci z předchozí sekvence.

Jak předvídat sekvenci z předchozí sekvence

Následují kroky pro predikci sekvence z předchozí sekvence pomocí PyTorch.

Krok 1) Načtení našich dat

Pro naši datovou sadu použijete datovou sadu z Dvojjazyčné větné dvojice oddělené tabulátory. Zde použiji dataset z angličtiny do indonéštiny. Můžete si vybrat cokoli, ale nezapomeňte změnit název souboru a adresář v kódu.

from __future__ import unicode_literals, print_function, division

import torch

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

import numpy as np

import pandas as pd

import os

import re

import random

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

Krok 2) Příprava dat

Dataset nelze použít přímo. Musíte rozdělit věty na slova a převést je na One-Hot Vector. Každé slovo bude jedinečně indexováno ve třídě Lang, aby se vytvořil slovník. Třída Lang uloží každou větu a rozdělí ji slovo po slovu pomocí addSentence. Poté vytvořte slovník indexováním každého neznámého slova pro Sequence do sekvenčních modelů.

SOS_token = 0

EOS_token = 1

MAX_LENGTH = 20

#initialize Lang Class

class Lang:

def __init__(self):

#initialize containers to hold the words and corresponding index

self.word2index = {}

self.word2count = {}

self.index2word = {0: "SOS", 1: "EOS"}

self.n_words = 2 # Count SOS and EOS

#split a sentence into words and add it to the container

def addSentence(self, sentence):

for word in sentence.split(' '):

self.addWord(word)

#If the word is not in the container, the word will be added to it,

#else, update the word counter

def addWord(self, word):

if word not in self.word2index:

self.word2index[word] = self.n_words

self.word2count[word] = 1

self.index2word[self.n_words] = word

self.n_words += 1

else:

self.word2count[word] += 1

Třída Lang je třída, která nám pomůže vytvořit slovník. Pro každý jazyk bude každá věta rozdělena na slova a poté přidána do kontejneru. Každý kontejner uloží slova do příslušného rejstříku, spočítá slovo a přidá rejstřík slova, abychom jej mohli použít k nalezení rejstříku slova nebo vyhledání slova z jeho rejstříku.

Protože naše data jsou oddělena TAB, musíte použít pandy jako náš zavaděč dat. Pandy přečtou naše data jako dataFrame a rozdělí je na naši zdrojovou a cílovou větu. Za každou větu, kterou máš,

- budete to normalizovat na malá písmena,

- odstranit všechny neznakové

- převést na ASCII z Unicode

- rozdělte věty, abyste v ní měli každé slovo.

#Normalize every sentence

def normalize_sentence(df, lang):

sentence = df[lang].str.lower()

sentence = sentence.str.replace('[^A-Za-z\s]+', '')

sentence = sentence.str.normalize('NFD')

sentence = sentence.str.encode('ascii', errors='ignore').str.decode('utf-8')

return sentence

def read_sentence(df, lang1, lang2):

sentence1 = normalize_sentence(df, lang1)

sentence2 = normalize_sentence(df, lang2)

return sentence1, sentence2

def read_file(loc, lang1, lang2):

df = pd.read_csv(loc, delimiter='\t', header=None, names=[lang1, lang2])

return df

def process_data(lang1,lang2):

df = read_file('text/%s-%s.txt' % (lang1, lang2), lang1, lang2)

print("Read %s sentence pairs" % len(df))

sentence1, sentence2 = read_sentence(df, lang1, lang2)

source = Lang()

target = Lang()

pairs = []

for i in range(len(df)):

if len(sentence1[i].split(' ')) < MAX_LENGTH and len(sentence2[i].split(' ')) < MAX_LENGTH:

full = [sentence1[i], sentence2[i]]

source.addSentence(sentence1[i])

target.addSentence(sentence2[i])

pairs.append(full)

return source, target, pairs

Další užitečnou funkcí, kterou využijete, je převod párů na Tensor. To je velmi důležité, protože naše síť čte pouze data typu tenzor. Je to také důležité, protože to je ta část, že na každém konci věty bude token, který sdělí síti, že vstup je dokončen. Pro každé slovo ve větě získá index z příslušného slova ve slovníku a na konec věty přidá token.

def indexesFromSentence(lang, sentence):

return [lang.word2index[word] for word in sentence.split(' ')]

def tensorFromSentence(lang, sentence):

indexes = indexesFromSentence(lang, sentence)

indexes.append(EOS_token)

return torch.tensor(indexes, dtype=torch.long, device=device).view(-1, 1)

def tensorsFromPair(input_lang, output_lang, pair):

input_tensor = tensorFromSentence(input_lang, pair[0])

target_tensor = tensorFromSentence(output_lang, pair[1])

return (input_tensor, target_tensor)

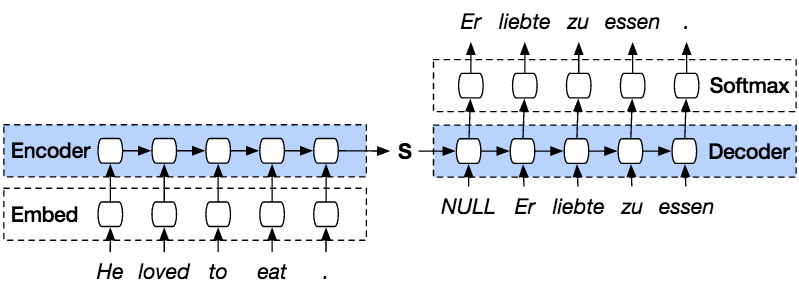

Model Seq2Seq

PyTorModel Seq2seq je druh modelu, který používá PyTorch kodér a dekodér nad modelem. Kodér zakóduje větu slovo po slově do indexu slovní zásoby nebo známých slov s indexem a dekodér predikuje výstup kódovaného vstupu dekódováním vstupu v sekvenci a pokusí se použít poslední vstup jako další vstup, pokud je to možné. Touto metodou je také možné predikovat další vstup pro vytvoření věty. Každé větě bude přiřazen token označující konec sekvence. Na konci predikce bude také token označující konec výstupu. Z kodéru tedy předá dekodéru stav pro predikci výstupu.

Encoder zakóduje naši vstupní větu slovo po slovu v pořadí a na konci bude token pro označení konce věty. Kodér se skládá z vrstvy Embedding a vrstvy GRU. Vrstva Embedding je vyhledávací tabulka, která ukládá vložení našeho vstupu do slovníku slov s pevnou velikostí. Bude předán vrstvě GRU. Vrstva GRU je Gated Recurrent Unit, která se skládá z několika typů vrstev RNN který vypočítá sekvenční vstup. Tato vrstva vypočítá skrytý stav z předchozího a aktualizuje reset, aktualizaci a nové brány.

Dekodér dekóduje vstup z výstupu kodéru. Pokusí se předpovědět další výstup a pokusí se jej použít jako další vstup, pokud je to možné. Dekodér se skládá z vrstvy vkládání, vrstvy GRU a lineární vrstvy. Vložená vrstva vytvoří vyhledávací tabulku pro výstup a předá ji do vrstvy GRU pro výpočet předpokládaného stavu výstupu. Poté lineární vrstva pomůže vypočítat aktivační funkci pro určení skutečné hodnoty předpokládaného výstupu.

class Encoder(nn.Module):

def __init__(self, input_dim, hidden_dim, embbed_dim, num_layers):

super(Encoder, self).__init__()

#set the encoder input dimesion , embbed dimesion, hidden dimesion, and number of layers

self.input_dim = input_dim

self.embbed_dim = embbed_dim

self.hidden_dim = hidden_dim

self.num_layers = num_layers

#initialize the embedding layer with input and embbed dimention

self.embedding = nn.Embedding(input_dim, self.embbed_dim)

#intialize the GRU to take the input dimetion of embbed, and output dimention of hidden and

#set the number of gru layers

self.gru = nn.GRU(self.embbed_dim, self.hidden_dim, num_layers=self.num_layers)

def forward(self, src):

embedded = self.embedding(src).view(1,1,-1)

outputs, hidden = self.gru(embedded)

return outputs, hidden

class Decoder(nn.Module):

def __init__(self, output_dim, hidden_dim, embbed_dim, num_layers):

super(Decoder, self).__init__()

#set the encoder output dimension, embed dimension, hidden dimension, and number of layers

self.embbed_dim = embbed_dim

self.hidden_dim = hidden_dim

self.output_dim = output_dim

self.num_layers = num_layers

# initialize every layer with the appropriate dimension. For the decoder layer, it will consist of an embedding, GRU, a Linear layer and a Log softmax activation function.

self.embedding = nn.Embedding(output_dim, self.embbed_dim)

self.gru = nn.GRU(self.embbed_dim, self.hidden_dim, num_layers=self.num_layers)

self.out = nn.Linear(self.hidden_dim, output_dim)

self.softmax = nn.LogSoftmax(dim=1)

def forward(self, input, hidden):

# reshape the input to (1, batch_size)

input = input.view(1, -1)

embedded = F.relu(self.embedding(input))

output, hidden = self.gru(embedded, hidden)

prediction = self.softmax(self.out(output[0]))

return prediction, hidden

class Seq2Seq(nn.Module):

def __init__(self, encoder, decoder, device, MAX_LENGTH=MAX_LENGTH):

super().__init__()

#initialize the encoder and decoder

self.encoder = encoder

self.decoder = decoder

self.device = device

def forward(self, source, target, teacher_forcing_ratio=0.5):

input_length = source.size(0) #get the input length (number of words in sentence)

batch_size = target.shape[1]

target_length = target.shape[0]

vocab_size = self.decoder.output_dim

#initialize a variable to hold the predicted outputs

outputs = torch.zeros(target_length, batch_size, vocab_size).to(self.device)

#encode every word in a sentence

for i in range(input_length):

encoder_output, encoder_hidden = self.encoder(source[i])

#use the encoder’s hidden layer as the decoder hidden

decoder_hidden = encoder_hidden.to(device)

#add a token before the first predicted word

decoder_input = torch.tensor([SOS_token], device=device) # SOS

#topk is used to get the top K value over a list

#predict the output word from the current target word. If we enable the teaching force, then the #next decoder input is the next word, else, use the decoder output highest value.

for t in range(target_length):

decoder_output, decoder_hidden = self.decoder(decoder_input, decoder_hidden)

outputs[t] = decoder_output

teacher_force = random.random() < teacher_forcing_ratio

topv, topi = decoder_output.topk(1)

input = (target[t] if teacher_force else topi)

if(teacher_force == False and input.item() == EOS_token):

break

return outputs

Krok 3) Trénink modelu

Tréninkový proces v modelech Seq2seq začíná převodem každé dvojice vět na tenzory z jejich Lang indexu. Náš model sekvence k sekvenci bude používat SGD jako optimalizátor a funkci NLLLoss pro výpočet ztrát. Tréninkový proces začíná vložením dvojice vět do modelu, aby se předpověděl správný výstup. V každém kroku bude výstup z modelu vypočítán pomocí pravdivých slov, aby se našly ztráty a aktualizovaly se parametry. Takže protože použijete 75000 75000 iterací, náš model sekvence k sekvenci vygeneruje náhodných XNUMX XNUMX párů z naší datové sady.

teacher_forcing_ratio = 0.5

def clacModel(model, input_tensor, target_tensor, model_optimizer, criterion):

model_optimizer.zero_grad()

input_length = input_tensor.size(0)

loss = 0

epoch_loss = 0

# print(input_tensor.shape)

output = model(input_tensor, target_tensor)

num_iter = output.size(0)

print(num_iter)

#calculate the loss from a predicted sentence with the expected result

for ot in range(num_iter):

loss += criterion(output[ot], target_tensor[ot])

loss.backward()

model_optimizer.step()

epoch_loss = loss.item() / num_iter

return epoch_loss

def trainModel(model, source, target, pairs, num_iteration=20000):

model.train()

optimizer = optim.SGD(model.parameters(), lr=0.01)

criterion = nn.NLLLoss()

total_loss_iterations = 0

training_pairs = [tensorsFromPair(source, target, random.choice(pairs))

for i in range(num_iteration)]

for iter in range(1, num_iteration+1):

training_pair = training_pairs[iter - 1]

input_tensor = training_pair[0]

target_tensor = training_pair[1]

loss = clacModel(model, input_tensor, target_tensor, optimizer, criterion)

total_loss_iterations += loss

if iter % 5000 == 0:

avarage_loss= total_loss_iterations / 5000

total_loss_iterations = 0

print('%d %.4f' % (iter, avarage_loss))

torch.save(model.state_dict(), 'mytraining.pt')

return model

Krok 4) Otestujte model

Proces hodnocení Seq2seq PyTorÚkolem je zkontrolovat výstup modelu. Každá dvojice modelů Sequence-to-Sequence bude vložena do modelu a vygeneruje predikovaná slova. Poté se podíváte na nejvyšší hodnotu u každého výstupu, abyste našli správný index. A nakonec porovnáte naši predikci modelu se skutečnou větou.

def evaluate(model, input_lang, output_lang, sentences, max_length=MAX_LENGTH):

with torch.no_grad():

input_tensor = tensorFromSentence(input_lang, sentences[0])

output_tensor = tensorFromSentence(output_lang, sentences[1])

decoded_words = []

output = model(input_tensor, output_tensor)

# print(output_tensor)

for ot in range(output.size(0)):

topv, topi = output[ot].topk(1)

# print(topi)

if topi[0].item() == EOS_token:

decoded_words.append('<EOS>')

break

else:

decoded_words.append(output_lang.index2word[topi[0].item()])

return decoded_words

def evaluateRandomly(model, source, target, pairs, n=10):

for i in range(n):

pair = random.choice(pairs)

print(‘source {}’.format(pair[0]))

print(‘target {}’.format(pair[1]))

output_words = evaluate(model, source, target, pair)

output_sentence = ' '.join(output_words)

print(‘predicted {}’.format(output_sentence))

Nyní začněme naše školení se Seq to Seq, s počtem iterací 75000 1 a počtem RNN vrstvy 512 se skrytou velikostí XNUMX.

lang1 = 'eng'

lang2 = 'ind'

source, target, pairs = process_data(lang1, lang2)

randomize = random.choice(pairs)

print('random sentence {}'.format(randomize))

#print number of words

input_size = source.n_words

output_size = target.n_words

print('Input : {} Output : {}'.format(input_size, output_size))

embed_size = 256

hidden_size = 512

num_layers = 1

num_iteration = 100000

#create encoder-decoder model

encoder = Encoder(input_size, hidden_size, embed_size, num_layers)

decoder = Decoder(output_size, hidden_size, embed_size, num_layers)

model = Seq2Seq(encoder, decoder, device).to(device)

#print model

print(encoder)

print(decoder)

model = trainModel(model, source, target, pairs, num_iteration)

evaluateRandomly(model, source, target, pairs)

Jak můžete vidět, naše předpokládaná věta se příliš neshoduje, takže abyste získali vyšší přesnost, musíte trénovat s mnohem více daty a zkusit přidat další iterace a počet vrstev pomocí Sequence k sekvenčnímu učení.

random sentence ['tom is finishing his work', 'tom sedang menyelesaikan pekerjaannya']

Input : 3551 Output : 4253

Encoder(

(embedding): Embedding(3551, 256)

(gru): GRU(256, 512)

)

Decoder(

(embedding): Embedding(4253, 256)

(gru): GRU(256, 512)

(out): Linear(in_features=512, out_features=4253, bias=True)

(softmax): LogSoftmax()

)

Seq2Seq(

(encoder): Encoder(

(embedding): Embedding(3551, 256)

(gru): GRU(256, 512)

)

(decoder): Decoder(

(embedding): Embedding(4253, 256)

(gru): GRU(256, 512)

(out): Linear(in_features=512, out_features=4253, bias=True)

(softmax): LogSoftmax()

)

)

5000 4.0906

10000 3.9129

15000 3.8171

20000 3.8369

25000 3.8199

30000 3.7957

35000 3.8037

40000 3.8098

45000 3.7530

50000 3.7119

55000 3.7263

60000 3.6933

65000 3.6840

70000 3.7058

75000 3.7044

> this is worth one million yen

= ini senilai satu juta yen

< tom sangat satu juta yen <EOS>

> she got good grades in english

= dia mendapatkan nilai bagus dalam bahasa inggris

< tom meminta nilai bagus dalam bahasa inggris <EOS>

> put in a little more sugar

= tambahkan sedikit gula

< tom tidak <EOS>

> are you a japanese student

= apakah kamu siswa dari jepang

< tom kamu memiliki yang jepang <EOS>

> i apologize for having to leave

= saya meminta maaf karena harus pergi

< tom tidak maaf karena harus pergi ke

> he isnt here is he

= dia tidak ada di sini kan

< tom tidak <EOS>

> speaking about trips have you ever been to kobe

= berbicara tentang wisata apa kau pernah ke kobe

< tom tidak <EOS>

> tom bought me roses

= tom membelikanku bunga mawar

< tom tidak bunga mawar <EOS>

> no one was more surprised than tom

= tidak ada seorangpun yang lebih terkejut dari tom

< tom ada orang yang lebih terkejut <EOS>

> i thought it was true

= aku kira itu benar adanya

< tom tidak <EOS>